We’re all (well, at least I am) getting pretty excited by what we see with ChatGPT and other large language models - it’s amazing progress in a ridiculously short space of time.

For example, I recently used it to generate code for Arduino projects - and it produced a working project - amazing!

But, a lot of people take great pleasure in pointing out that it often seems to make things up. It can have quite an interesting relationship with the “truth”.

There was a really interesting post on Hacker News recently that linked to a great paper - Playing Games with AIs: The Limits of GPT-3 and Similar Large Language Models - and reading this gave me a bit of a lightbulb moment.

Large Language Models (LLMs) are great at generating plausible text - but they’re not necessarily great at generating truthful text. They are learning the structure of language, not the facts of the world.

There’s a great example in the paper where they ask GPT to continue the statement John Prescott* was born in…

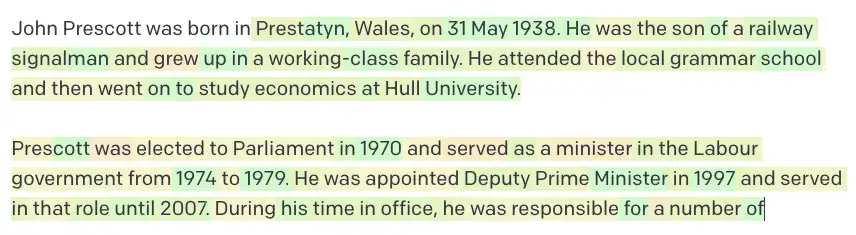

When they tried this, GPT-3 generated the following completion:

in Hull on June 8th 1941.

This is a perfectly plausible answer - but it’s not true. John Prescott was born in Prestatyn on 31st May 1938.

It’s not answering the question “Where and when was John Prescott born?” - it’s just creating the most plausible continuation of the statement.

And it knows that a continuation of this kind of statement should be a relevant town and date - but it doesn’t necessarily know which town and which date it should use.
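To make that idea a bit more concrete, here’s a deliberately silly sketch. It is not how GPT-3 works internally - it’s just a frequency lookup I’ve made up for illustration. The `ASSOCIATIONS` list and the `complete_born_in` function are hypothetical. The point is that if a name turns up next to “Hull” more often than “Prestatyn”, the most plausible continuation is the wrong one.

```python
from collections import Counter

# Hypothetical "training data": places that appear alongside the name.
# Hull shows up a lot (he was the MP for Hull East); the real
# birthplace, Prestatyn, is mentioned far less often.
ASSOCIATIONS = [
    ("John Prescott", "Hull"),
    ("John Prescott", "Hull"),
    ("John Prescott", "Hull"),
    ("John Prescott", "Prestatyn"),
]


def complete_born_in(name, associations):
    """Continue '<name> was born in' with the most frequently seen place.

    This picks the most *plausible* place, not the *true* one - it has
    no idea which association actually means "birthplace".
    """
    counts = Counter(place for person, place in associations if person == name)
    place, _ = counts.most_common(1)[0]
    return f"{name} was born in {place}"


print(complete_born_in("John Prescott", ASSOCIATIONS))
```

The toy picks Hull because Hull is the strongest association, not because it has any notion of what “born in” means.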

According to the paper, this is because GPT has not memorised all the facts about John Prescott - it’s compressed the semantic relationships about him.

Interestingly, when I tried the same prompt in the OpenAI Playground, the davinci model produced an accurate answer:

The curie model made up some information:

The babbage model really went to town:

And the ada model also produced a plausible but incorrect answer:

ChatGPT produced this:

John Prescott was born on May 31, 1938 in Prestatyn, Denbighshire, Wales. He is a British politician who served as the Deputy Prime Minister of the United Kingdom from 1997 to 2007, under Prime Minister Tony Blair. He was also the Member of Parliament for Hull East from 1970 to 2010, and served as the President of the Board of Trade, the Secretary of State for the Environment, and the Secretary of State for Transport.

It’s correct - and includes some nice extra details - pretty similar performance to the davinci model.

However, as I’m writing this I’ve just realised that I blindly accepted the additional information as true. I didn’t even bother to check it! (It is true - I just checked on Wikipedia…)

This highlights the danger: as humans, we seem quite susceptible to believing things that seem plausible. This is probably quite an important part of just getting through the day - we don’t have time to check everything we read or hear - so we have to make some assumptions about what is true and what is not.

So, what does this mean for us? Is it safe to use things like ChatGPT?

If you’re looking for factual answers - then you need to verify what comes out of these models. And let’s be honest, you should be doing this with any source of information - we’ve come to assume that what we get from a Google search or a Wikipedia article must be true - but maybe we should be a bit more careful.

What we should avoid:

- Getting factual answers and blindly trusting them

- Trying to solve maths problems - for the love of everything that is holy stop trying this and then posting about how it failed

- Anything that involves deep reasoning and deduction - it’s not a human brain

Things that I think are great for ChatGPT are:

- Creating marketing copy - with a human reviewer in the loop

- Generating code - but check that the APIs it suggests are real

- Finding problems in code

- Summarising code/text

- Rubber ducking - talking to a computer can be a great way to work through a problem

- Providing inspiration for ideas

- And many many others…
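On the “check that the APIs it suggests are real” point: for Python code, one cheap first pass is to see whether the dotted name the model gave you actually resolves. This is just a minimal sketch - `api_exists` is a helper name I’ve made up, it only checks what’s installed in your current environment, and importing a module runs its top-level code, so only point it at packages you trust. It tells you the name exists, not that it does what the model claims.

```python
import importlib


def api_exists(dotted_name: str) -> bool:
    """Return True if a name like 'json.dumps' resolves in this environment."""
    parts = dotted_name.split(".")
    # Find the longest prefix that imports as a module, then walk the
    # remaining parts as attributes on it.
    for i in range(len(parts), 0, -1):
        try:
            obj = importlib.import_module(".".join(parts[:i]))
        except ImportError:
            continue
        try:
            for attr in parts[i:]:
                obj = getattr(obj, attr)
        except AttributeError:
            return False
        return True
    return False


# Names a model might suggest: two real ones and two invented ones.
for name in ["json.dumps", "os.path.join", "json.dump_pretty", "not_a_real_module_xyz.foo"]:
    print(name, api_exists(name))
```

It won’t catch wrong arguments or wrong behaviour, but it does catch the “that function simply doesn’t exist” case before you waste time debugging it.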

As always - this is a constantly moving target - and I’m sure that it won’t be long before we have an intelligent library computer at our fingertips.

*John Prescott

John Prescott was quite a famous politician in the UK. He was Deputy Prime Minister from 1997 to 2007. He was also the Member of Parliament for Hull East from 1970 to 2010, and served as the President of the Board of Trade, the Secretary of State for the Environment, and the Secretary of State for Transport (this was actually filled in for me by Copilot - and yes, I have fact checked it!).

They don’t make them like that anymore…